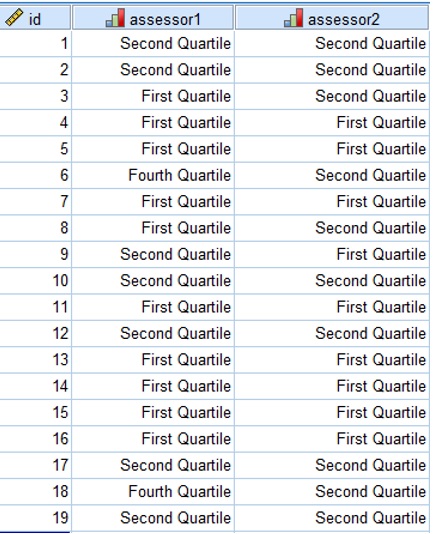

![Selection and Reporting of Statistical Methods to Assess Reliability of a Diagnostic Test: Conformity to Recommended Methods in a Peer-Reviewed Journal | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/b9fe163b52c4d4ccc5b1ad9668f80aea6ee34a70/3-Table1-1.png)

[PDF] Selection and Reporting of Statistical Methods to Assess Reliability of a Diagnostic Test: Conformity to Recommended Methods in a Peer-Reviewed Journal | Semantic Scholar

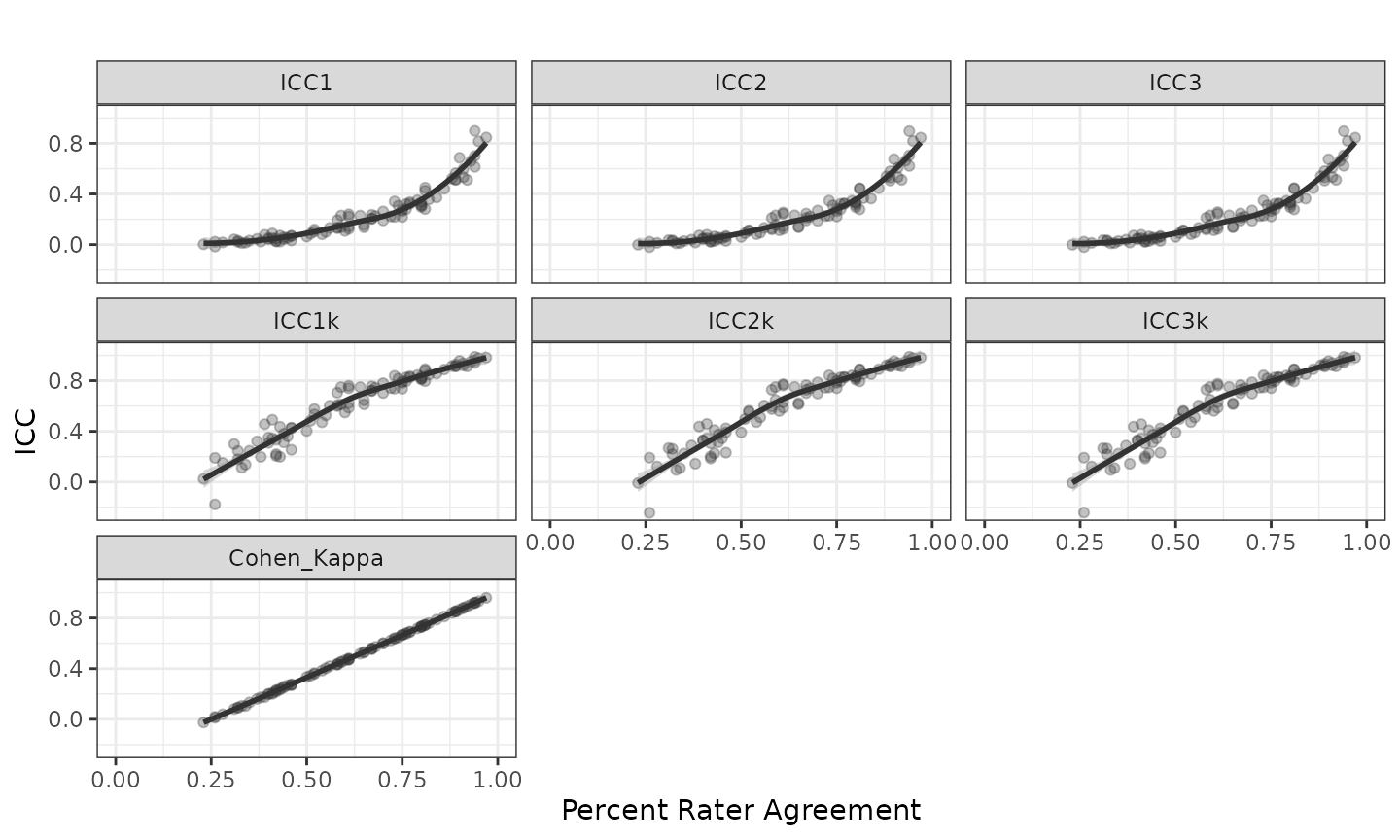

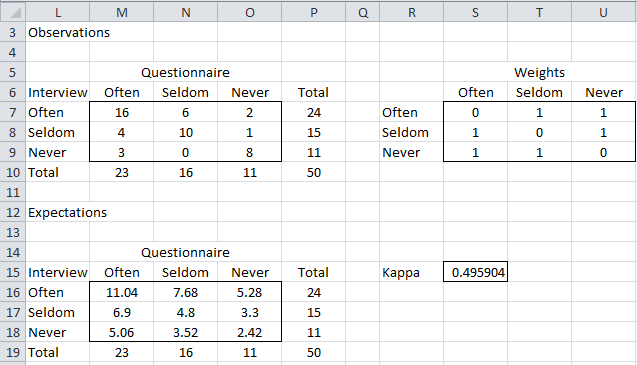

of results (percent agreement). Cohen's kappa statistic (κ) - degrees... | Download Scientific Diagram

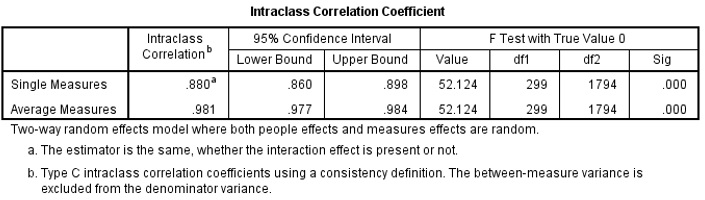

2005 All Hands Meeting. Measuring Reliability: The Intraclass Correlation Coefficient. Lee Friedman, Ph.D. - ppt download

The Equivalence of Weighted Kappa and the Intraclass Correlation Coefficient as Measures of Reliability - Joseph L. Fleiss, Jacob Cohen, 1973

Table 4 from Percent Agreement, Pearson's Correlation, and Kappa as Measures of Inter-examiner Reliability | Semantic Scholar